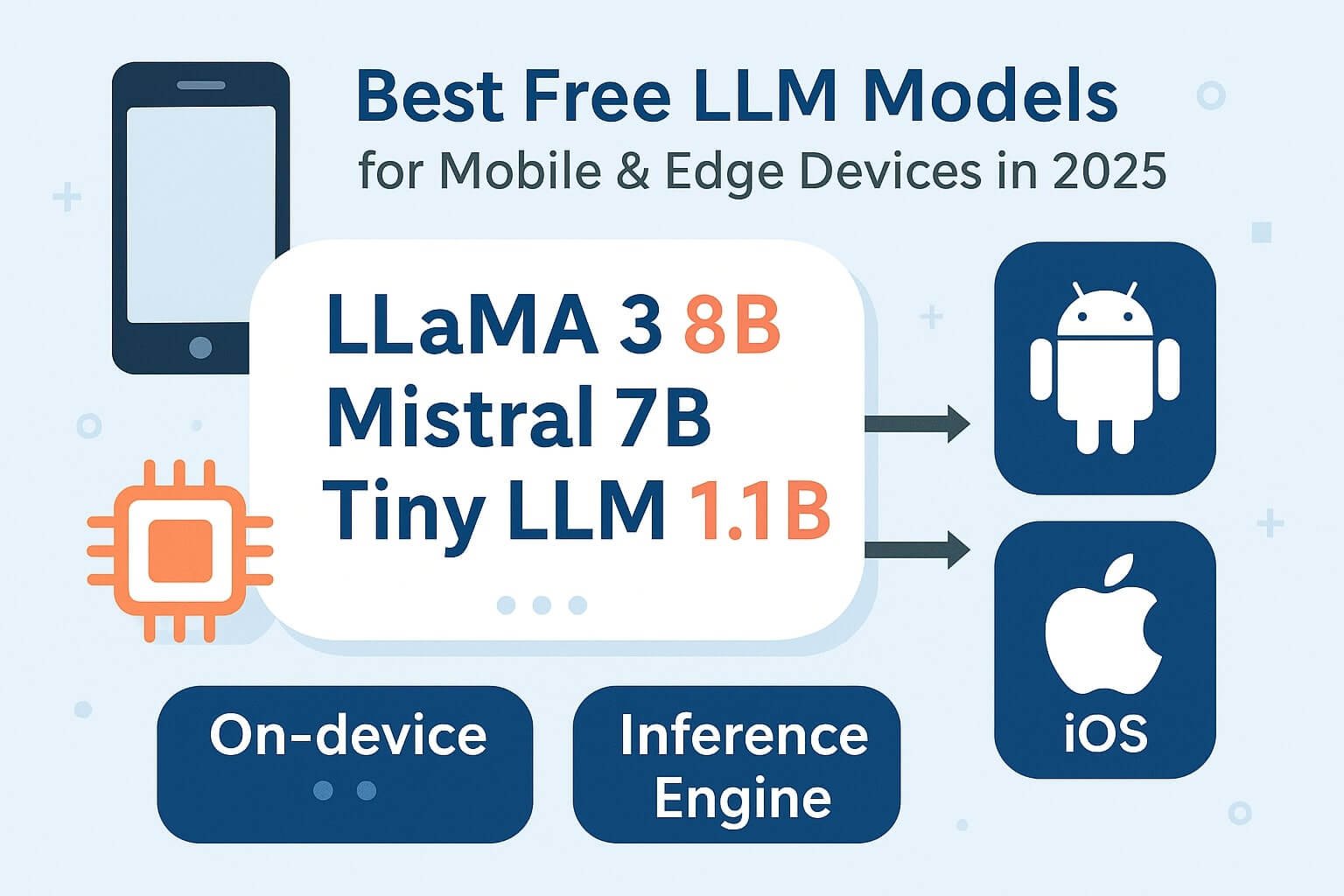

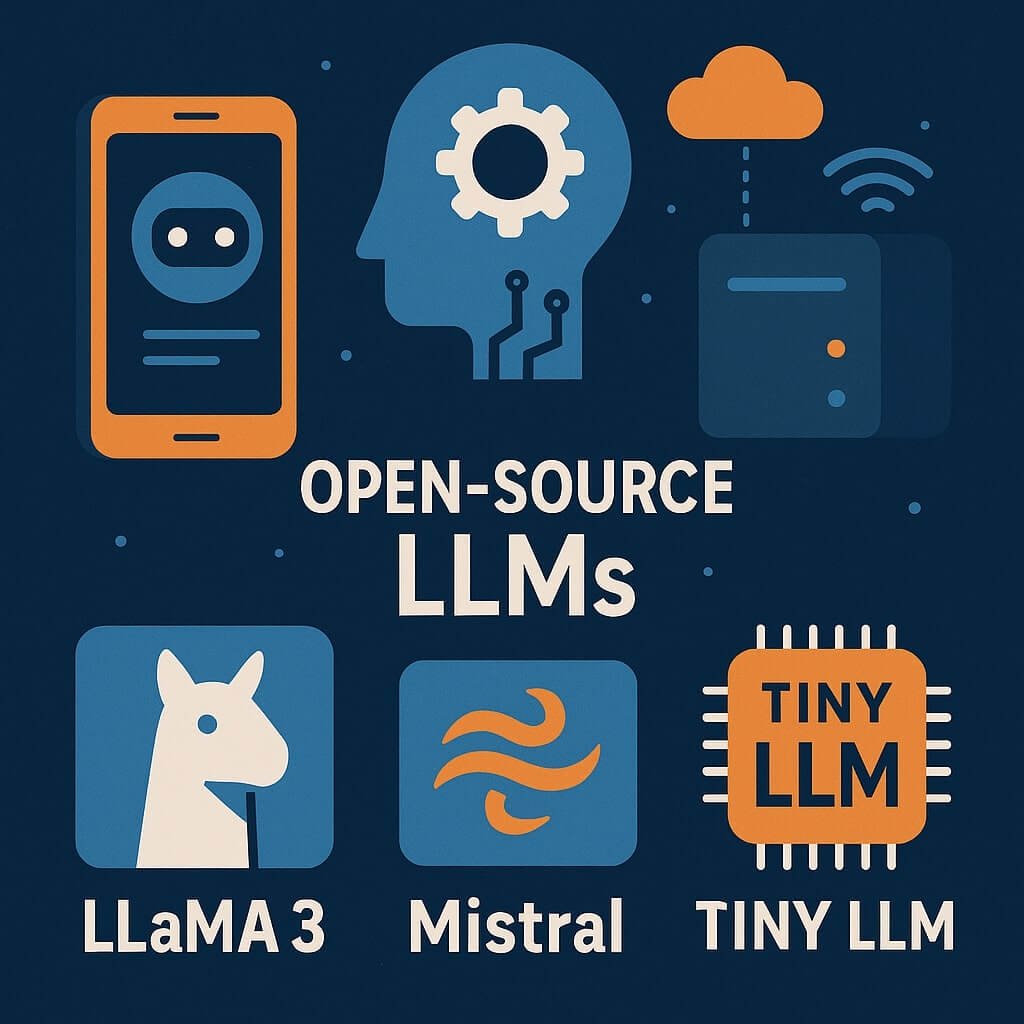

Large language models are no longer stuck in the cloud. In 2025, you can run powerful, open-source LLMs directly on mobile devices and edge chips — with no internet connection or vendor lock-in.

This post lists the best free and open LLMs available for real-time, on-device use. Each model supports inference on consumer-grade Android phones, iPhones, Raspberry Pi-like edge chips, and even laptops with modest GPUs.

📦 What Makes a Good Edge LLM?

- Size: ≤ 3B parameters is ideal for edge use

- Speed: inference latency under 300ms preferred

- Low memory usage: fits in < 6 GB RAM

- Compatibility: runs on CoreML, ONNX, or GGUF formats

- License: commercially friendly (Apache, MIT)

🔝 Top 10 Free LLMs for Mobile and Edge

1. Mistral 7B (Quantized)

Best mix of quality + size. GGUF-quantized versions like q4_K_M fit on modern Android with 6 GB RAM.

2. LLaMA 3 (8B, 4B)

Meta’s latest model. Quantized 4-bit versions run well on Apple Silicon with llama.cpp or CoreML.

3. Phi-2 (by Microsoft)

Compact 1.3B model tuned for reasoning. Excellent for chatbots and local summarizers on devices.

4. TinyLLaMA (1.1B)

Trained from scratch for mobile use. Works in < 2GB RAM and ideal for micro-agents.

5. Mistral Mini (2.7B, new)

Community-built variant of Mistral with aggressive quantization. < 300MB binary.

6. Gemma 2B (Google)

Fine-tuned model with fast decoding. Works with Gemini inference wrapper on Android.

7. Neural Chat (Intel 3B)

ONNX-optimized. Benchmarks well on NPU-equipped Android chips.

8. Falcon-RW 1.3B

Open license and fast decoding with llama.cpp backend.

9. Dolphin 2.2 (2B, uncensored)

Instruction-tuned for broad dialog tasks. Ideal for offline chatbots.

10. WizardCoder (1.5B)

Code generation LLM for local dev tools. Runs inside VS Code plugin with < 2GB RAM.

🧰 How to Run LLMs on Device

🟩 Android

- Use llama.cpp-android or llama-rs JNI wrappers

- Build AICore integration using Gemini Lite runner

- Quantize to GGUF format with tools like llama.cpp or llamafile

🍎 iOS / macOS

- Use CoreML conversion via `transformers-to-coreml` script

- Run in background thread with DispatchQueue

- Use CreateML or HuggingFace conversion pipelines

📊 Benchmark Snapshot (on-device)

| Model | RAM Used | Avg Latency | Output Speed |

|---|---|---|---|

| Mistral 7B q4 | 5.7 GB | 410ms | 9.3 tok/sec |

| Phiphi-2 | 2.1 GB | 120ms | 17.1 tok/sec |

| TinyLLaMA | 1.6 GB | 89ms | 21.2 tok/sec |

🔐 Offline Use Cases

- Medical apps (no server calls)

- Educational apps in rural/offline regions

- Travel planners on airplane mode

- Secure enterprise tools with no external telemetry

📂 Recommended Tools

- llama.cpp — C++ inference engine (Android, iOS, desktop)

- transformers.js — Web-based LLM runner

- GGUF Format — For quantized model sharing

- lmdeploy — Model deployment CLI for edge