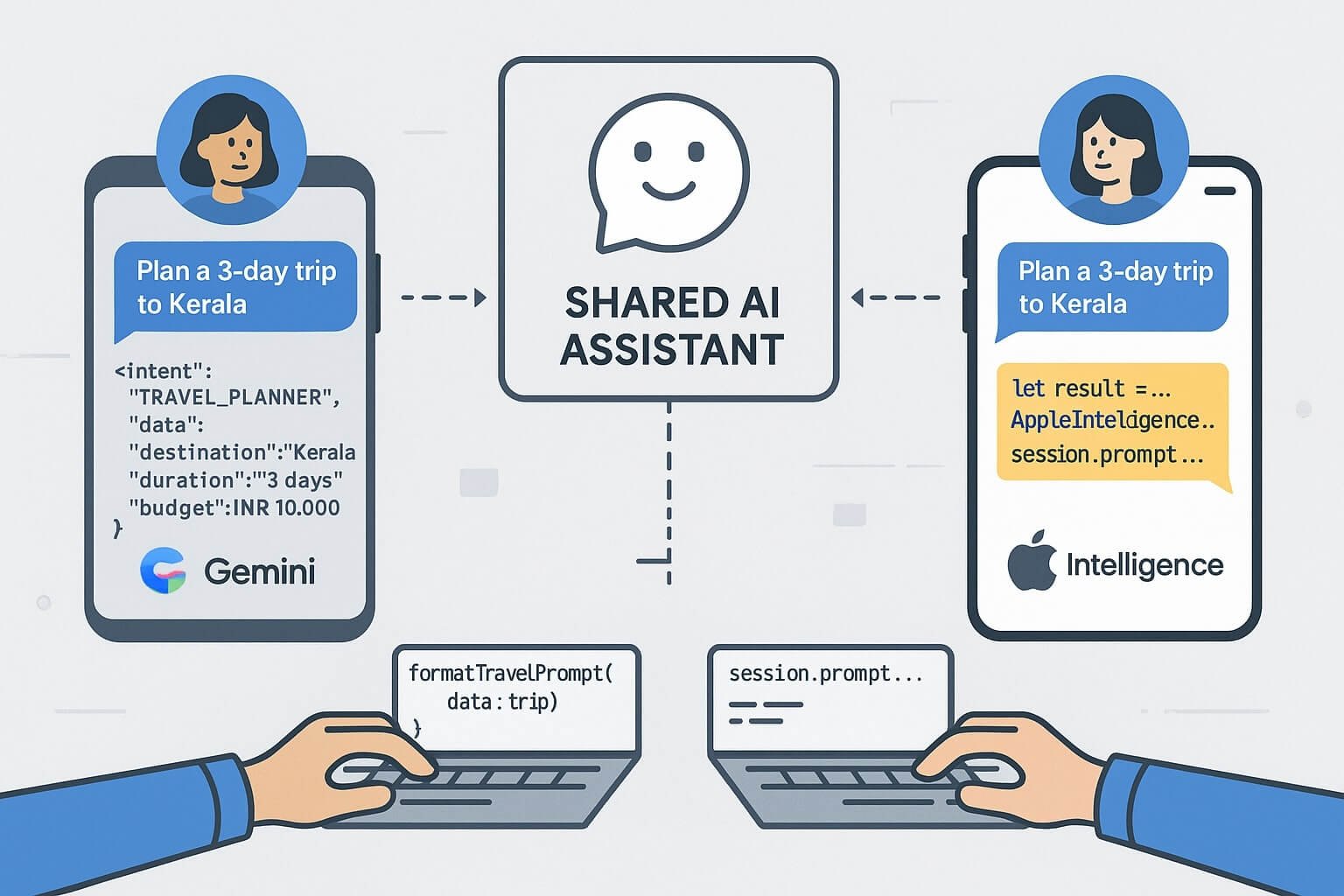

Developers are now building intelligent features for both iOS and Android, often on different AI platforms: Gemini on Android and Apple Intelligence on iOS. So how do you build a shared assistant experience across both ecosystems?

This post walks through building a cross-platform AI agent that behaves consistently even when the underlying LLM frameworks differ. We’ll cover design principles, API wrappers, shared prompt memory, and session persistence patterns.

📦 Goals of a Shared Assistant

- Consistent prompt structure and tone across platforms

- Shared memory/session history between devices

- Uniform fallback behavior (offline mode, cloud execution)

- Cross-platform UI/UX parity

🧱 Architecture Overview

The basic architecture looks like this:

            [ Shared Assistant Intent Engine ]
                   /                  \
    [ Gemini Prompt SDK ]      [ Apple Intelligence APIs ]
      (Kotlin + AICore)          (Swift + AIEditTask)
                   \                  /
            [ Shared Prompt Memory Sync ]

Each platform handles local execution, but prompt intent and reply structure stay consistent.
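One way to keep intent and reply structure consistent is to hide each platform SDK behind a small shared interface. The sketch below is only an illustration under that assumption: `AssistantBackend`, `AssistantReply`, `IntentEngine`, and `EchoBackend` are hypothetical names, not part of the Gemini or Apple SDKs, and the echo backend stands in for a real model call.

```kotlin
// Hypothetical shared contract; none of these names come from the Gemini or Apple SDKs.
data class AssistantReply(val text: String, val backend: String)

// Each platform wraps its native LLM call behind this interface.
interface AssistantBackend {
    val name: String
    fun generate(prompt: String): String
}

// Stand-in backend for illustration; a real one would call the platform SDK.
class EchoBackend(override val name: String) : AssistantBackend {
    override fun generate(prompt: String): String = "[$name] $prompt"
}

// The engine owns prompt wording and reply shape, so both platforms stay aligned.
class IntentEngine(private val backend: AssistantBackend) {
    fun ask(intent: String, details: String): AssistantReply {
        val prompt = "Intent: $intent. $details".trim()
        return AssistantReply(text = backend.generate(prompt), backend = backend.name)
    }
}
```

In a real app `generate` would likely be a `suspend` function, since both on-device and cloud calls are asynchronous; it is kept synchronous here to keep the sketch short.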

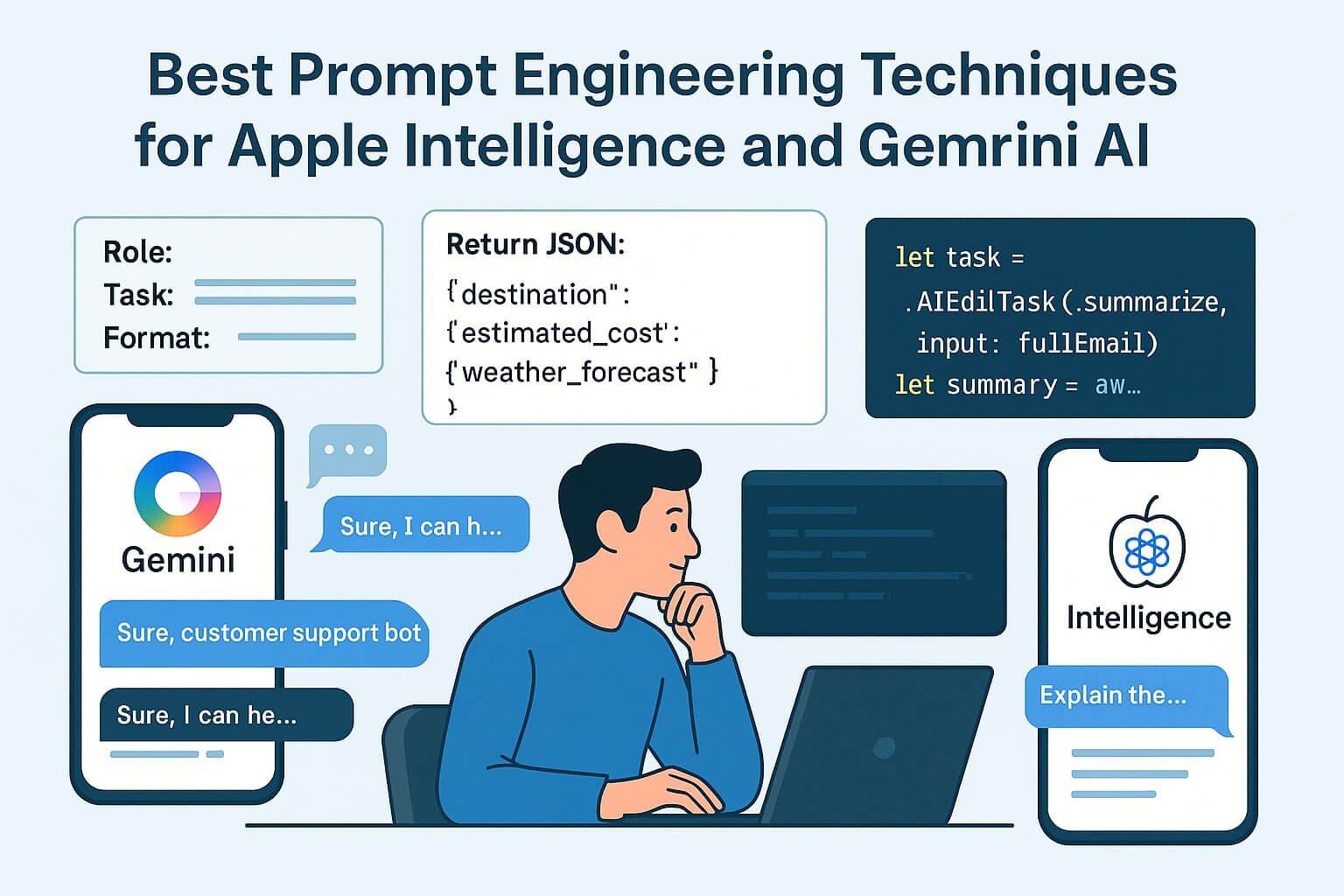

🧠 Defining Shared Prompt Intents

Create a common schema:

    {
      "intent": "TRAVEL_PLANNER",
      "data": {
        "destination": "Kerala",
        "duration": "3 days",
        "budget": "INR 10,000"
      }
    }
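In shared code, the schema can be mirrored by typed classes so that malformed intents fail early rather than producing a half-filled prompt. The following is a minimal sketch, assuming the JSON has already been decoded into a nested map by whatever JSON library the project uses; `AssistantIntent`, `TravelData`, and `intentFromMap` are hypothetical names.

```kotlin
// Hypothetical typed mirror of the JSON schema above.
data class TravelData(val destination: String, val duration: String, val budget: String)

data class AssistantIntent(val intent: String, val data: TravelData)

// Build an intent from already-decoded JSON (a nested map), failing fast on missing fields.
fun intentFromMap(raw: Map<String, Any?>): AssistantIntent {
    val name = raw["intent"] as? String ?: error("missing 'intent'")
    val data = raw["data"] as? Map<*, *> ?: error("missing 'data'")
    fun field(key: String): String = data[key] as? String ?: error("missing 'data.$key'")
    return AssistantIntent(
        intent = name,
        data = TravelData(field("destination"), field("duration"), field("budget")),
    )
}
```

Validating once at the boundary means the platform-specific code can assume every field is present.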

Each platform converts this into its native format:

Apple Swift (AIEditTask)

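    // Call shape is illustrative: treat `AppleIntelligence.perform` and `AIEditTask`
    // as placeholders for the platform's on-device text-generation API.
    let prompt = """
    You are a travel assistant. Suggest a 3-day trip to Kerala under ₹10,000.
    """
    let result = await AppleIntelligence.perform(AIEditTask(.generate, input: prompt))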

Android Kotlin (Gemini)

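    // `session` is assumed to be an already-initialized Gemini prompt session.
    val result = session.prompt("You are a travel assistant. Suggest a 3-day trip to Kerala under ₹10,000.")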

🔄 Synchronizing Memory & State

Use Firestore, Supabase, or Realm to store:

- Session ID

- User preferences

- Prompt history

- Previous assistant decisions

Push the current state to both the iOS and Android clients so that a conversation started on one device can continue on the other.
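Whichever store is used, the two devices eventually need to reconcile their copies of a session. Below is a minimal sketch of one possible merge rule (union the history by entry id, order by timestamp, remote preferences win). The `SessionState` and `PromptEntry` shapes and the conflict policy are assumptions for illustration, not something Firestore, Supabase, or Realm prescribes.

```kotlin
// Hypothetical shape of the synced state; field names are illustrative.
data class PromptEntry(val id: String, val timestampMs: Long, val prompt: String, val reply: String)

data class SessionState(
    val sessionId: String,
    val preferences: Map<String, String>,
    val history: List<PromptEntry>,
)

// Merge two copies of the same session: union the history by entry id,
// order by timestamp, and let the remote preferences win on conflicts.
fun mergeSessions(local: SessionState, remote: SessionState): SessionState {
    require(local.sessionId == remote.sessionId) { "session ids differ" }
    val history = (local.history + remote.history)
        .distinctBy { it.id }
        .sortedBy { it.timestampMs }
    return SessionState(local.sessionId, local.preferences + remote.preferences, history)
}
```

"Remote wins" is the simplest policy; an app that edits preferences offline may want per-field timestamps instead.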

🧩 Kotlin Multiplatform + Swift Interop

Put the agent's shared business logic in a Kotlin Multiplatform module (KMP, formerly branded Kotlin Multiplatform Mobile, or KMM) and export it to iOS:

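    // Shared prompt formatter (Kotlin Multiplatform common code)
    data class TravelRequest(val destination: String, val duration: String, val budget: String)

    fun formatTravelPrompt(data: TravelRequest): String {
        // Phrase the duration after the noun so values like "3 days" read naturally.
        return "Plan a trip to ${data.destination} lasting ${data.duration}, under ${data.budget}."
    }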
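As more intents are added, the shared module can route each intent name to its own formatter and prepend a common preamble, so both apps send identical wording. This is a sketch only: `PromptRegistry` and `travelRegistry` are hypothetical names, and the data is passed as a plain string map to keep the example self-contained.

```kotlin
// Hypothetical registry mapping intent names to prompt formatters.
// Keeping it in the shared module means both apps render identical wording.
class PromptRegistry(private val preamble: String) {
    private val formatters = mutableMapOf<String, (Map<String, String>) -> String>()

    fun register(intent: String, formatter: (Map<String, String>) -> String) {
        formatters[intent] = formatter
    }

    // Prefix the shared preamble, then delegate to the intent's formatter.
    fun build(intent: String, data: Map<String, String>): String {
        val formatter = formatters[intent]
            ?: throw IllegalArgumentException("Unknown intent: $intent")
        return "$preamble ${formatter(data)}"
    }
}

// Example wiring for the travel intent from the shared schema.
fun travelRegistry(): PromptRegistry = PromptRegistry("You are a travel assistant.").apply {
    register("TRAVEL_PLANNER") { d ->
        "Plan a trip to ${d.getValue("destination")} lasting ${d.getValue("duration")}, under ${d.getValue("budget")}."
    }
}
```

Unknown intents throw instead of falling back to a generic prompt, which makes schema drift between the two apps visible during testing.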

🎨 UI Parity Tips

- Pair SwiftUI’s glass-like material backgrounds with a blurred or translucent Material 3 surface in Compose so cards look alike on both platforms

- Stick to rounded layouts, dynamic spacing, and minimum-scale text

- Design chat bubbles with equal line spacing and vertical rhythm

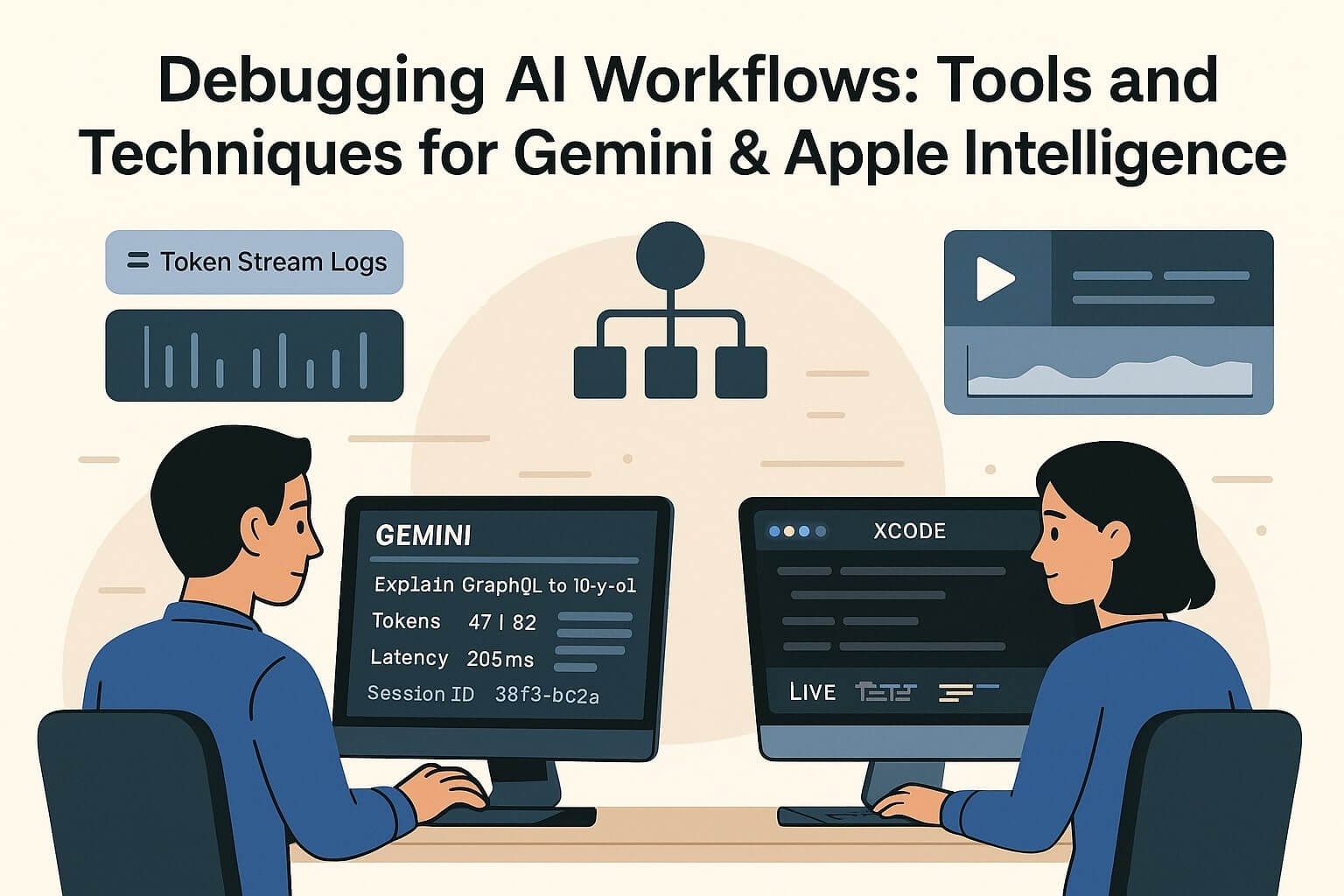

🔍 Debugging and Logs

- Gemini: Use Gemini Debug Console and PromptSession trace

- Apple: Xcode AI Profiler + LiveContext logs

Normalize logs across both by writing JSON wrappers and pushing to Firebase or Sentry.
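A JSON wrapper for this can be quite small. The sketch below assumes a handful of fields (`platform`, `sessionId`, `intent`, `latencyMs`, `message`) that are illustrative rather than required by Firebase or Sentry, and it hand-rolls the serialization only to stay dependency-free; a project already using a JSON library would use that instead.

```kotlin
// Hypothetical normalized log entry; field names are illustrative.
data class AssistantLog(
    val platform: String,   // e.g. "android" or "ios"
    val sessionId: String,
    val intent: String,
    val latencyMs: Long,
    val message: String,
)

// Escape the characters that would break a JSON string literal.
fun jsonEscape(s: String): String = buildString {
    for (c in s) {
        when (c) {
            '"' -> append("\\\"")
            '\\' -> append("\\\\")
            '\n' -> append("\\n")
            '\r' -> append("\\r")
            '\t' -> append("\\t")
            else -> if (c < ' ') append("\\u" + c.code.toString(16).padStart(4, '0')) else append(c)
        }
    }
}

// Serialize to a single-line JSON object, ready to hand to a log sink.
fun AssistantLog.toJson(): String {
    fun str(key: String, value: String) = "\"$key\":\"${jsonEscape(value)}\""
    return listOf(
        str("platform", platform),
        str("sessionId", sessionId),
        str("intent", intent),
        "\"latencyMs\":$latencyMs",
        str("message", message),
    ).joinToString(separator = ",", prefix = "{", postfix = "}")
}
```

Emitting the same keys from both platforms lets a single dashboard query compare latency and failures per intent across Android and iOS.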

🔐 Privacy Considerations

- Store session data locally by default, and sync to the cloud only after the user opts in

- Flag prompts that were offloaded to the cloud (on-device → server fallback) so users can see where each one ran

- Provide an export-history button that includes logs and summaries
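The opt-in and cloud-marking points can be enforced in one place. Here is a minimal sketch, assuming the local model signals "cannot answer" by returning `null`; `PrivacyAwareRouter`, `RoutedReply`, and `ExecutionSite` are hypothetical names, and the two lambdas stand in for the real on-device and server calls.

```kotlin
// Hypothetical privacy-aware routing; names are illustrative, not SDK APIs.
enum class ExecutionSite { ON_DEVICE, CLOUD }

data class RoutedReply(val text: String, val site: ExecutionSite)

class PrivacyAwareRouter(
    private val onDevice: (String) -> String?,   // returns null when the local model cannot answer
    private val cloud: (String) -> String,
    private val cloudOptIn: Boolean,
) {
    // Try the local model first; fall back to the cloud only with user consent,
    // and tag the reply so the UI and export log can show where it ran.
    fun route(prompt: String): RoutedReply {
        onDevice(prompt)?.let { return RoutedReply(it, ExecutionSite.ON_DEVICE) }
        check(cloudOptIn) { "Cloud fallback requires user opt-in" }
        return RoutedReply(cloud(prompt), ExecutionSite.CLOUD)
    }
}
```

Because every reply carries its `ExecutionSite`, the export history can list cloud-offloaded prompts without any extra bookkeeping.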

✅ Summary

Building shared AI experiences across platforms isn’t about using the same LLM — it’s about building consistent UX, logic, and memory across SDKs.